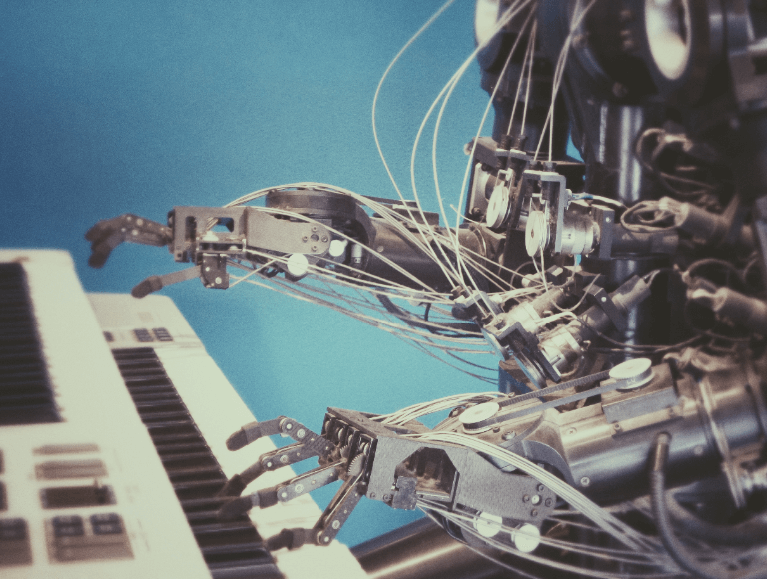

First there was “Software is Eating the World”

In 2011, Marc Andreessen famously read the writing on the wall about the industry changes then underway. His phrase “Software is Eating The World” summed things up concisely: businesses were becoming, and needed to become, software companies if they were going to compete successfully with the likes of Amazon and other rapidly growing companies. This prompted many companies to embark on Digital Transformation initiatives to meet the challenge of a hyper-competitive, hyper-efficient business environment. These efforts mostly focused on consolidating enterprise-wide data in Data Lakes in the hope of analyzing it and gaining new insights. However, acting on those insights to improve the business usually required subsequent human labor. What was missing was the immediate, timely, and automated application of the insights to the business.

Now “AI is Eating Software”

There is now new writing on the wall that businesses need to prepare for. Instead of relying on logic hand-crafted by armies of software developers, competitive companies are using Big Data to “train” Machine Learning and AI algorithms to automatically detect patterns in the data and then classify and predict outcomes from them. Furthermore, these automated “decisions” are being used to drive automated systems, cutting out the human friction that previously constrained business responses. Data and the automated insights are now actionable.

Since 2011, a number of factors have converged to make this possible:

- Massive increases in compute power, especially the massively parallel processing of Graphics Processing Units (GPUs)

- Exponential growth in training datasets, including multi-million-record, community-built open source datasets

- ML / AI frameworks, especially Open Source ones

- Tremendous reductions in cost for compute, storage, and software, especially for on-demand resources available in the Cloud

Increasingly, thanks to abundant and sometimes over-hyped press coverage, businesses are becoming aware of the power and potential of ML and AI. That coverage has highlighted breakthroughs like human-level image and video recognition; near-human-level speech recognition; natural-language understanding (NLU); automated language translation; conversational appliances like Alexa, and chatbots; self-driving automobiles and semi-trucks; and even better-than-human performance in games, where human world champions have been defeated.

But many of these impressive accomplishments represent cutting-edge ML / AI research, much of it serving primarily to produce academic papers. Indeed, new AI research papers are currently being published at a rate on the order of 70 per day! Even the researchers are struggling to keep up with the advancements.

What Can A Business Do In This New World?

While the field of ML / AI is rapidly advancing, much of that progress is at the cutting edge of research. So what can a business do if it is interested in extending its digital transformation to include ML / AI capabilities? Well, it turns out that in parallel with the exciting advances described above, many practical and business-relevant ML / AI capabilities have now been productionized and made usable for nearly all businesses. So rather than treating ML / AI as an end in and of itself (as the academic efforts do), businesses can now include these technologies as yet another tool to be folded into their digital transformations.

The remainder of this blog post provides practical advice to such businesses.

Tip #1: First, Get Your Big Data House in Order

While I was at AWS, I engaged with literally hundreds of AWS customers as a Sr. Specialist SA for ML/AI, and prior to that, as a Sr. Specialist SA for Big Data. In that time, I observed many customers struggling to extend their digital transformations with ML/AI. The tendency for too many customers has been to pursue ML/AI as a technology-first concern, even before getting their Big Data House in order, or understanding a real business need.

To apply ML at scale and with real business impact, it is critical that the business actually has Big Data relevant to its needs, and that the data lives in a Data Lake on AWS. These are prerequisites for business-worthy ML because training an ML model requires LOTS of training examples, and because the ML training and inferencing compute needs to sit close to the big data. This is especially true for Deep Learning which, although it can achieve higher accuracy, requires much more training data than other types of ML such as Gradient-Boosted Trees (XGBoost), linear regression, factorization machines, etc. Amazon SageMaker, a major platform for ML, is designed to train models using data in an Amazon S3 Data Lake, and to use the trained models both for batch inferencing on bulk data in the Data Lake and for serving individual inferencing requests from client applications. Without your Big Data in Amazon S3, a powerful ML platform like SageMaker won’t help your business.

Tip #2: Focus On A Business Need Rather Than On The Technology

All too often, businesses interested in tapping into ML / AI identify a technology first, and then evaluate the chosen technology on something small and contrived. You should avoid small ML/AI POCs, pilots, and experiments that focus first on the technology; these rarely translate into business-enhancing transformations. Instead, find a business problem for which an ML/AI solution could have a real ROI. An analogy I like to use to make this point is the following: nobody opens up their home toolbox, selects a tool, and THEN asks “what kinds of things can I use this tool for?” On the contrary, you start with a real home problem you need to fix, and THEN you select the best tool to use. Remember, ML/AI is just another tool, not an end in and of itself. Let the business problem, its characteristics, and the opportunity drive the choice of ML/AI technology. Different problems will benefit most from different, appropriately selected algorithms.

Tip #3: Start With Low-Hanging Fruit

Among the “productionized” forms of ML/AI mentioned above, specifically on AWS, there are two main classes of solution available. They differ in the amount and type of expertise needed to use them.

The first class, which I refer to as low-hanging fruit, allows individuals without ML expertise and without large amounts of training data to nevertheless inject intelligence into existing legacy applications. These take the form of AI services (RESTful APIs) that already contain pre-trained ML models for common needs. AWS manages the AI services, including scaling and re-training, while your application developers focus on connecting the legacy applications to the services for classifications and predictions.

There are several AWS AI services that can support Natural Language Understanding (NLU) on textual and document-oriented data. These include Amazon Comprehend, Amazon Textract, Amazon Transcribe, and Amazon Translate. Other services include the Text-to-Speech service Amazon Polly; the voice/text Chatbot service Amazon Lex; Amazon Forecast for time series predictions; Amazon Personalize, which uses real-time user activity (clicks, page views, signups, purchases) to identify the right product recommendations; and the Video and Image analysis services Amazon Rekognition Image/Video; among others.
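
To make the integration point concrete, here is a minimal Python sketch of an application asking one of these pre-trained services, Amazon Comprehend, for the sentiment of a piece of text. It assumes the `boto3` SDK and configured AWS credentials for a real call; the helper name `classify_sentiment` and the `StubComprehend` class are hypothetical, introduced only so the sketch can run without AWS access.

```python
from typing import Any, Dict


def classify_sentiment(client: Any, text: str, language: str = "en") -> Dict[str, Any]:
    """Ask a Comprehend-style client for the sentiment of one piece of text.

    `client` is expected to expose detect_sentiment(Text=..., LanguageCode=...),
    as the boto3 Comprehend client does.
    """
    response = client.detect_sentiment(Text=text, LanguageCode=language)
    label = response["Sentiment"]        # "POSITIVE", "NEGATIVE", "NEUTRAL" or "MIXED"
    scores = response["SentimentScore"]  # keys: "Positive", "Negative", "Neutral", "Mixed"
    return {"label": label, "confidence": scores[label.capitalize()]}


class StubComprehend:
    """Hypothetical stand-in that mimics the shape of the service response."""

    def detect_sentiment(self, Text: str, LanguageCode: str) -> Dict[str, Any]:
        return {
            "Sentiment": "POSITIVE",
            "SentimentScore": {
                "Positive": 0.97, "Negative": 0.01, "Neutral": 0.01, "Mixed": 0.01,
            },
        }


if __name__ == "__main__":
    # Real usage (assumes boto3 is installed and credentials are configured):
    #   import boto3
    #   client = boto3.client("comprehend")
    client = StubComprehend()
    print(classify_sentiment(client, "The delivery arrived early and intact."))
```

Passing the client in as a parameter keeps the legacy application code independent of how the service is reached, which also makes it easy to test without network access.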

If you do have abundant training data and wish to train your own model, there is yet another low-hanging fruit. Amazon SageMaker is an alternative to taking on the work of deploying a fleet of ML servers yourself (e.g. using DL AMIs on EC2). SageMaker is a fully managed service for ML that provides a host of “out-of-the-box” benefits such as automated model training, automated hosting of a trained model for production inferencing, a long list of built-in algorithms, decoupling of training compute from inferencing compute, right-sized compute for the job, and auto-scaling of inferencing endpoints, among many other features. All of this comes without the need to configure, deploy, operate, and scale a fleet of EC2 servers.

Tip #4: Tune Automatically

Take advantage of automated Hyperparameter Optimization (HPO) to find the best combination of hyperparameters for your model and needs in the shortest amount of time. Optimizing the hyperparameters for your model training can greatly improve its effectiveness, but finding them through manual trial-and-error can be laborious and time-consuming. SageMaker automates this for you: its automatic tuning intelligently explores combinations of hyperparameters to optimize the training metric you care about (e.g. accuracy).
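
To illustrate what an automated tuner does in principle, here is a minimal Python sketch using plain random search. This is deliberately simpler than the guided exploration a managed tuner performs, and it is not SageMaker's API; the function names, the search space, and the toy objective (which stands in for "train a model and return its validation accuracy") are all assumptions made for illustration.

```python
import random
from typing import Callable, Dict, Tuple

Params = Dict[str, float]
SearchSpace = Dict[str, Tuple[float, float]]  # name -> (low, high)


def random_search(
    objective: Callable[[Params], float],
    space: SearchSpace,
    trials: int = 50,
    seed: int = 0,
) -> Tuple[Params, float]:
    """Sample hyperparameter combinations at random and keep the best one."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    best_params: Params = {}
    best_score = float("-inf")
    for _ in range(trials):
        params = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_validation_accuracy(params: Params) -> float:
    """Hypothetical objective that peaks at learning_rate=0.1, dropout=0.3."""
    return 1.0 - (params["learning_rate"] - 0.1) ** 2 - (params["dropout"] - 0.3) ** 2


if __name__ == "__main__":
    space = {"learning_rate": (0.001, 0.5), "dropout": (0.0, 0.8)}
    params, score = random_search(toy_validation_accuracy, space, trials=200)
    print(params, score)
```

In a real setting each call to the objective is a full training run, which is why handing the search to a managed service that runs trials in parallel and chooses the next candidates for you saves so much time.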

Tip #5: Retrain Regularly

ML models often represent the patterns in data at a specific time: a snapshot. However, business data may change over time (think seasonal transactions, weather changes, trends), so your model can drift and lose its predictive power. Therefore, it is important to retrain the model periodically on new, larger, representative datasets to avoid this drift.
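
One simple way to decide when a retrain is due is to compare the model's recent accuracy against the accuracy it had when it was deployed. The Python sketch below shows that idea; the function name `needs_retraining`, the tolerance of 0.05, and the minimum sample size of 30 are illustrative assumptions, not recommended values.

```python
from statistics import mean
from typing import Sequence


def needs_retraining(
    baseline_accuracy: float,
    recent_outcomes: Sequence[int],
    tolerance: float = 0.05,
    min_samples: int = 30,
) -> bool:
    """Flag a retrain when recent accuracy falls well below the baseline.

    `recent_outcomes` holds 1 for each recent prediction that turned out
    to be correct and 0 for each one that turned out to be wrong.
    """
    if len(recent_outcomes) < min_samples:
        return False  # not enough evidence yet to call it drift
    recent_accuracy = mean(recent_outcomes)
    return (baseline_accuracy - recent_accuracy) > tolerance


if __name__ == "__main__":
    # 40 correct and 10 wrong predictions against a 0.92 baseline.
    print(needs_retraining(0.92, [1] * 40 + [0] * 10))
```

A check like this can complement a fixed retraining schedule: the schedule covers slow, expected change, while the check catches sudden shifts in the data.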

Tip #6: Pick The Right Talent

Conclusion

So, to recap: the business environment is now shifting from a software-driven one to an ML/AI-driven one.

While there are exciting and rapidly evolving academic achievements in AI that are hard to keep up with, there are also practical “productionized” ML/AI capabilities available today for businesses.

We covered a number of tips that your business can apply as it extends its digital transformation to include ML/AI capabilities.

NorthBay has the experienced ML/AI talent to help you take advantage of these powerful new capabilities as you continue your digital transformation.

About NorthBay Solutions

NorthBay Solutions is a leading provider of cutting-edge technology solutions, specializing in Agentic AI, Generative AI MSP, Generative AI, Cloud Migration, ML/AI, Data Lakes and Analytics, and Managed Services. As an AWS Premier Partner, we leverage the power of the cloud to deliver innovative and scalable solutions to clients across various industries, including Healthcare, Fintech, Logistics, Manufacturing, Retail, and Education.

Our commitment to AWS extends to our partnerships with industry-leading companies like CloudRail-IIOT, RiverMeadow, and Snowflake. These collaborations enable us to offer comprehensive and tailored solutions that seamlessly integrate with AWS services, providing our clients with the best possible value and flexibility.

With a global footprint spanning NAMER (US & Canada), MEA (Kuwait, Qatar, UAE, KSA & Africa), Turkey, and APAC (including Indonesia, Singapore, and Hong Kong), NorthBay Solutions is committed to providing exceptional service and support to businesses worldwide.